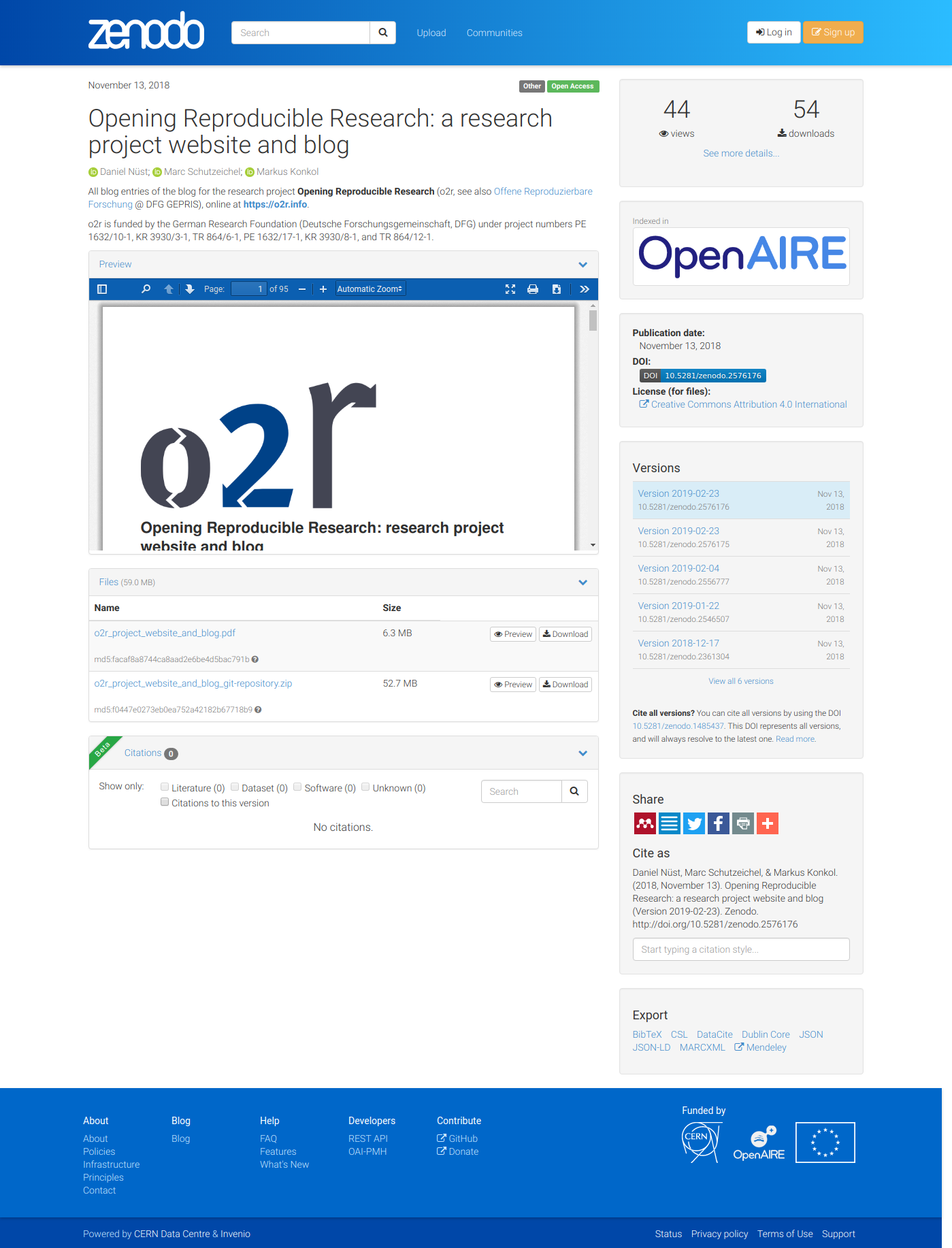

Archiving a Research Project Website on Zenodo

24 Feb 2019 | By Daniel Nüst

The o2r project website’s first entry Introducing o2r was published 1132 days ago. Since then we’ve published short and long reports about events the o2r team participated in, advertised new scholarly publications we were lucky to have accepted in journals, and reported on results of workshops organised by o2r. But there has also been some original content from time to time, such as the extensive articles on Docker and R, which received several updates over the last years (some still pending), on the integration of Stencila and Binder, or on writing reproducible articles for Copernicus Publications. These posts are a valuable output of the project, and contribute to the scholarly discussion. Therefore, when it came to writing a report on the project’s activities and outputs, it was time to consider the preservation of the project website and blog. The website is built with Jekyll (with Markdown source files) and hosted with GitHub pages, but GitHub may disappear and Jekyll might stop working at some point.

So how can we archive the blog posts and the website in a sustainable way, without any manual intervention?

Today’s blog post documents the steps to automatically deposit the sources, the HTML rendering, a PDF rendering, and the whole git repository in a new version of a Zenodo deposit with each new blog post using Zenodo’s DOI versioning. The PDF was especially tricky but is very important, because the format is established for archival of content, while using the Zenodo API was pretty straightforward. We hope the presented workflow might be useful for other websites and blogs in a scientific context. It goes like this:

- The Makefile target `update_zenodo_deposit` starts the whole process with `make update_zenodo_deposit`. It triggers several other make targets, some of which require two processes to run at the same time:
  - Remove previously existing outputs (“clean”).
  - Build the whole page with Jekyll and serve it using a local web server.
  - Create a PDF from the whole website from the local web server using `wkhtmltopdf` and the special page `/all_content`, which renders all blog entries in a suitable layout together with an automatically compiled list of author names and all website pages, unless they are excluded from the menu (e.g. manual redirection/shortened URLs) or excluded from “all pages” (e.g. the 404 page, blogroll, or publications list).
  - Create a ZIP archive with the sources, HTML rendering and PDF capture.
  - Run a Python script to upload the PDF and ZIP files to Zenodo using the Zenodo API, which includes several requests to retrieve the latest version metadata, check that there really is a new blog post, create a new deposit, remove the existing files in the deposit, upload the new files, and eventually publish the record.
  - Kill the still running web server.
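The local part of these steps (clean, serve, capture, archive, stop the server) can be sketched in Python. This is only an illustration and not the project's Makefile: the output names `site.pdf` and `site.zip`, the choice of `_posts` and `_site` as archive contents, the fixed startup delay, and the default Jekyll address `http://localhost:4000` are all assumptions.

```python
import subprocess
import time
import zipfile
from pathlib import Path


def make_archive(base, names, zip_path):
    """Bundle the given files/directories (relative to base) into one ZIP."""
    base = Path(base)
    with zipfile.ZipFile(zip_path, "w", zipfile.ZIP_DEFLATED) as archive:
        for name in names:
            source = base / name
            if source.is_file():
                members = [source]
            else:
                members = sorted(p for p in source.rglob("*") if p.is_file())
            for member in members:
                # Store paths relative to the site root inside the archive.
                archive.write(member, member.relative_to(base).as_posix())
    return Path(zip_path)


def capture_site(site_dir=".", page_url="http://localhost:4000/all_content",
                 startup_seconds=10):
    """Serve the site locally, capture one page as PDF, then archive."""
    site = Path(site_dir).resolve()
    pdf = site / "site.pdf"      # assumed output name
    pdf.unlink(missing_ok=True)  # "clean": drop a previous capture

    # Second process: Jekyll builds the site and serves it in the background.
    server = subprocess.Popen(
        ["bundle", "exec", "jekyll", "serve", "--no-watch"], cwd=site)
    try:
        time.sleep(startup_seconds)  # crude wait until the server is up
        subprocess.run(["wkhtmltopdf", page_url, str(pdf)], check=True)
    finally:
        # Always stop the web server, even if the capture failed.
        server.terminate()
        server.wait()

    return pdf, make_archive(site, ["_posts", "_site", pdf.name],
                             site / "site.zip")
```

Calling `capture_site("path/to/website")` requires Jekyll and `wkhtmltopdf` to be installed. Unlike the workflow described above, this sketch stops the server right after the capture rather than after the upload.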

For these steps to run automatically, the GitHub action configuration `deposit.yml` (originally the Travis CI configuration file `.travis.yml`; link to commit where Travis CI configuration was removed in favour of…) installs the required software environment to conduct all of the above steps on each change to the main branch.

A secure environment variable for the repository is used to store a Zenodo API key, so the build system can manipulate the record.
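The sequence of API requests for a new version might look roughly like the following sketch. It is not the project's actual upload script: the function name `publish_new_version` is made up for illustration, `session` is assumed to be a `requests.Session`-like object, and the metadata update and the check for a new post are left out. The endpoints follow Zenodo's documented deposit API.

```python
from pathlib import Path

ZENODO_API = "https://zenodo.org/api"


def publish_new_version(session, deposition_id, files, token, api=ZENODO_API):
    """Create, fill and publish a new version of an existing Zenodo record.

    `session` is expected to behave like requests.Session (an assumption).
    """
    params = {"access_token": token}
    base = f"{api}/deposit/depositions"

    # 1. Ask Zenodo to create a new version draft of the published record.
    response = session.post(
        f"{base}/{deposition_id}/actions/newversion", params=params)
    response.raise_for_status()
    draft_url = response.json()["links"]["latest_draft"]

    # 2. Fetch the draft; it starts out with the previous version's files.
    response = session.get(draft_url, params=params)
    response.raise_for_status()
    draft = response.json()

    # 3. Remove the inherited files from the draft.
    for old in draft.get("files", []):
        response = session.delete(
            f"{draft_url}/files/{old['id']}", params=params)
        response.raise_for_status()

    # 4. Upload the new PDF and ZIP into the draft's file bucket.
    bucket = draft["links"]["bucket"]
    for path in map(Path, files):
        with open(path, "rb") as handle:
            response = session.put(
                f"{bucket}/{path.name}", data=handle, params=params)
            response.raise_for_status()

    # 5. Publish the draft, which mints the DOI of the new version.
    response = session.post(f"{draft_url}/actions/publish", params=params)
    response.raise_for_status()
    return response.json()
```

In a CI job, this could be called with `requests.Session()` and a token read from an environment variable such as `ZENODO_TOKEN` (a hypothetical name), so the key never appears in the repository.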

The first version of this record, including its description, authors, tags, etc., was created manually.

So what is possible now? As pointed out in the citation note at the bottom of pages and posts, the Digital Object Identifier (DOI) allows referencing the whole website or specific posts (via pages in the PDF) in scholarly publications. Manual archival from a local computer is still possible by triggering the same make target. As long as Zenodo exists, readers have access to all content published by the o2r research project on its website.

There are no specific next steps planned, but there’s surely room for improvement as the current workflow is pretty complex.

The post publication date is the trigger for a new version, so changes to a page such as About or to an existing post require either manually triggering the workflow (and commenting out the check for a new post) or waiting for the next blog entry.
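Such a date-based trigger could be sketched as follows. The helper names are hypothetical, and the sketch assumes that post dates are taken from Jekyll's `YYYY-MM-DD-title.md` file names and compared against the `publication_date` field of the latest Zenodo version; the actual script may decide differently.

```python
from datetime import date


def post_date(filename):
    """Read the date from a Jekyll post file name (YYYY-MM-DD-title.md)."""
    return date.fromisoformat(filename[:10])


def has_new_post(post_filenames, record_publication_date):
    """True if any post is newer than the latest archived version.

    record_publication_date is an ISO date string such as "2019-02-24".
    """
    archived = date.fromisoformat(record_publication_date)
    return any(post_date(name) > archived for name in post_filenames)
```

With this kind of check, an edit to an existing post leaves every file name date unchanged, which is why such changes do not produce a new version on their own.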

The created PDF could be made compliant with PDF/A.

The control flow could also be implemented completely in Python instead of using multiple files and languages; a Python module might even properly manage the system dependencies.

Though large parts of the process are not limited to pages generated with Jekyll (the capturing and uploading), it might be effectively wrapped in a standalone Jekyll plugin, or in a combination of a Zenodo plugin together with the (stale?) jekyll-pdf plugin.

Your feedback is very welcome!